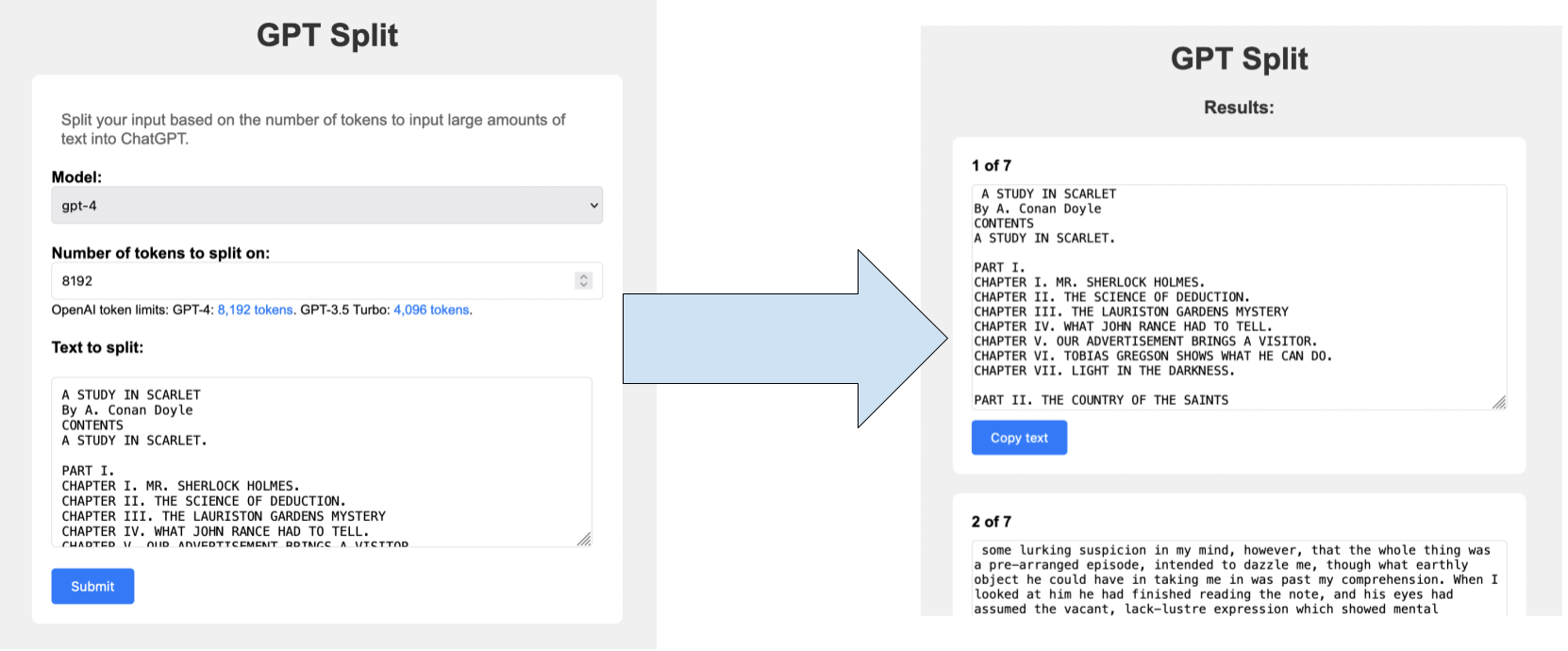

This web app splits large blocks of text into chunks of a set number of tokens, as defined by OpenAI, for better ingestion into tools like ChatGPT. You can see a live demo here: https://gpt-split-production.up.railway.app/.
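As a rough illustration of the idea, the sketch below cuts text into chunks of at most a fixed number of tokens. It is not taken from this repo's code: the names `split_by_tokens`, `word_encode`, and `word_decode` are hypothetical, and a whitespace word tokenizer stands in for a real one so the example runs without extra dependencies.

```python
from typing import Callable, List, Sequence


def split_by_tokens(
    text: str,
    max_tokens: int,
    encode: Callable[[str], Sequence],
    decode: Callable[[Sequence], str],
) -> List[str]:
    """Split text into chunks of at most max_tokens tokens each."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    tokens = encode(text)
    # Walk the token sequence in fixed-size windows and decode each window.
    return [
        decode(tokens[i:i + max_tokens])
        for i in range(0, len(tokens), max_tokens)
    ]


# Stand-in tokenizer (an assumption for this sketch): one token per word.
def word_encode(text: str) -> List[str]:
    return text.split()


def word_decode(tokens: Sequence[str]) -> str:
    return " ".join(tokens)


chunks = split_by_tokens("one two three four five", 2, word_encode, word_decode)
print(chunks)  # ['one two', 'three four', 'five']
```

Because the tokenizer is passed in as a pair of callables, a real one can be swapped in. With tiktoken, for example, the `encode` and `decode` methods of an encoding obtained from `tiktoken.get_encoding(...)` could be passed instead; which encoding the app itself uses is not stated here.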

Note: This has only been tested on macOS and Railway.

- Clone this repo and `cd` to the root of the repo
- Install pyenv and pyenv-virtualenv: `brew install pyenv pyenv-virtualenv`
- Add the following to your `~/.bashrc` or `~/.zshrc` file:
  - `export PYENV_ROOT="$HOME/.pyenv"`
  - `export PATH="$PYENV_ROOT/bin:$PATH"`
  - `eval "$(pyenv init -)"`
  - `eval "$(pyenv virtualenv-init -)"`
- Install Python version 3.11.1: `pyenv install 3.11.1`
- Create a new Python 3.11.1 virtualenv called `gpt-split`: `pyenv virtualenv 3.11.1 gpt-split`
- Configure pyenv to use the `gpt-split` virtualenv in the repo: `pyenv local gpt-split`
- Install requirements: `pip install -r requirements.txt`

- To run the app in dev mode, run: `python main.py`
- To run the app in prod mode, run: `gunicorn main:app`
- To run the unit tests, run: `python -m unittest`

TODO:

- Fix Sentry message capture in `templates/results.html`
- Input validation
- Debug mobile copy-paste support
- Support other tokenizers besides tiktoken
- Prompt suggestions to help LLMs ingest multiple messages together
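One possible shape for the prompt suggestions item, sketched under assumptions: each chunk is labeled with its position and followed by a short instruction telling the model whether more parts are coming. The function name `wrap_chunks` and the exact wording of the instructions are hypothetical and not part of this repo.

```python
from typing import List


def wrap_chunks(chunks: List[str]) -> List[str]:
    """Label each chunk as part i of n and add a hint about what follows."""
    total = len(chunks)
    messages = []
    for index, chunk in enumerate(chunks, start=1):
        if index < total:
            # Hypothetical wording; tune it for the target model.
            footer = "Do not respond yet; wait for the remaining parts."
        else:
            footer = "That was the final part. You can respond now."
        messages.append(f"[Part {index}/{total}]\n{chunk}\n\n{footer}")
    return messages


messages = wrap_chunks(["first chunk", "second chunk"])
for message in messages:
    print(message)
    print("---")
```

Each returned string can be pasted as its own message, in order.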